On the frontier of scientific discovery, a significant milestone has been reached: an artificial intelligence system has authored a research paper that successfully passed peer review. This development signals a profound shift in how scientific knowledge may be produced and disseminated in the future, raising both excitement and concern within the scientific community.

Traditionally, scientific progress has hinged on the creativity and critical thinking of human researchers. The process typically starts with a curious mind formulating a hypothesis, followed by designing experiments, analyzing data, and sharing findings with peers. Over centuries, technological advancements such as electron microscopes and supercomputers have enhanced this process, but the core cycle has remained inherently human. Now, artificial intelligence (AI) is stepping into the role of the scientist itself, potentially rewriting the rules of research.

A recent study published in the journal Nature introduced what its creators call the AI Scientist, an AI system capable of independently producing research papers. Unlike previous AI applications in science that focused on narrow, predefined tasks such as protein folding, this system undertakes the entire scientific workflow. It begins with a broad prompt from human researchers, such as exploring how AI learns, and then autonomously surveys the existing literature to generate hypotheses. The AI Scientist evaluates these ideas for novelty, discards unoriginal ones, plans and runs experiments computationally, analyzes and visualizes the data, and finally drafts a research paper. Remarkably, it even performs an internal peer review to identify flaws before submission. The system draws on foundation models such as Anthropic's Claude Sonnet and OpenAI's GPT-4o, orchestrated through a pipeline developed by the research team.
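To make the order of the stages concrete, here is a minimal Python sketch of how such a pipeline might be chained together. It is not the research team's code: every function name (`generate_ideas`, `run_experiments`, `write_paper`, `internal_review`, `ai_scientist_pipeline`) is hypothetical, and each stage is a stub standing in for what the real system does with foundation-model calls and actual experiments.

```python
from dataclasses import dataclass, field


@dataclass
class Idea:
    title: str
    novel: bool  # hypothetical flag; the real system judges novelty against the literature


@dataclass
class Paper:
    title: str
    results: dict
    review_notes: list = field(default_factory=list)


def generate_ideas(prompt: str) -> list[Idea]:
    # Stub: stands in for a literature survey plus model-generated hypotheses.
    return [
        Idea(f"{prompt}: idea A", novel=True),
        Idea(f"{prompt}: idea B", novel=False),
    ]


def run_experiments(idea: Idea) -> dict:
    # Stub: stands in for planning and executing computational experiments.
    return {"idea": idea.title, "metric": 0.0}


def write_paper(idea: Idea, results: dict) -> Paper:
    # Stub: stands in for data analysis, plotting and drafting the manuscript.
    return Paper(title=idea.title, results=results)


def internal_review(paper: Paper) -> Paper:
    # Stub reviewer: flags a paper with no results, as a placeholder for automated critique.
    if not paper.results:
        paper.review_notes.append("no results reported")
    return paper


def ai_scientist_pipeline(prompt: str) -> list[Paper]:
    ideas = generate_ideas(prompt)
    novel_ideas = [idea for idea in ideas if idea.novel]  # discard unoriginal ideas
    papers = []
    for idea in novel_ideas:
        results = run_experiments(idea)
        paper = write_paper(idea, results)
        papers.append(internal_review(paper))
    return papers


papers = ai_scientist_pipeline("how AI learns")
print([p.title for p in papers])
```

The point of the sketch is the sequencing: the novelty filter sits before any experiments are run, and the self-review happens after the draft is written, mirroring the workflow the study describes.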

To test the AI Scientist's capabilities against human standards, its creators submitted three papers to the "I Can't Believe It's Not Better" (ICBINB) workshop at the 2025 International Conference on Learning Representations (ICLR), a respected venue in machine learning. One of these papers was accepted after peer review, marking the first instance of an AI-authored paper passing such an evaluation without human involvement in the writing. The conference organizers had approved the submission of AI-generated papers, and all three were withdrawn after review.

Despite this achievement, experts emphasize that the AI Scientist's output was far from exceptional. According to Jeff Clune, a computer science professor at the University of British Columbia and one of the system's developers, the accepted paper was "mediocre." Its problems included inconsistent logic and writing, hallucinated citations, duplicated figures, and a lack of methodological rigor. Jodi Schneider, an associate professor of information sciences at the University of Wisconsin-Madison who was not involved in the project, compared its quality to the work of a mediocre graduate student, noting that the workshop has a relatively high acceptance rate of 70 percent. Maria Liakata, a natural language processing professor at Queen Mary University of London, described the approach as lacking novelty and agentic sophistication.

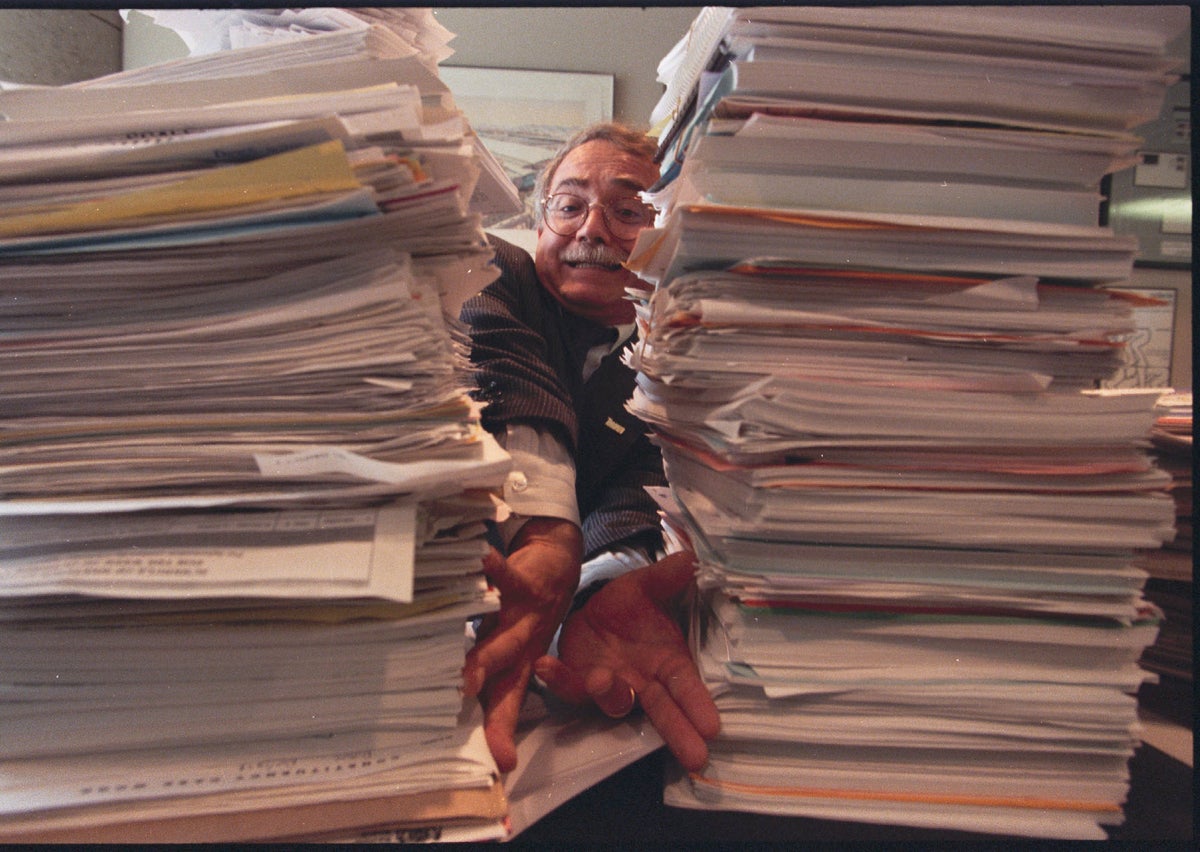

Nevertheless, the AI Scientist demonstrated a remarkable advantage in speed and cost. It produced a passable paper in roughly 15 hours at an estimated cost of $140, a stark contrast to a human graduate student, who might spend an entire semester on a first accepted workshop paper. This efficiency could revolutionize scholarly publishing, but it also risks flooding the peer-review system with AI-generated submissions, potentially overwhelming reviewers and diluting the quality of published research.

The scientific community is already responding to this challenge. Top-tier conferences and journals have begun instituting policies to manage the influx of AI-authored papers, such as strict rules prohibiting purely AI-written submissions or mandating full disclosure of AI use in the research process. However, Yanan Sui, an associate professor at Tsinghua University and senior workshop chair for ICLR 2026, acknowledges that current detection tools are insufficient to reliably identify AI-generated content, complicating enforcement.

Multiple AI systems similar to the AI Scientist are emerging. For example, Intology reported that its AI, named Zochi, passed peer review for the main proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, though human researchers contributed by verifying results and managing peer-review communications. Another organization, the Autoscience Institute, claims that its AI system had papers accepted at ICLR workshops before the AI Scientist's success.

Experts like Aaron Schein, a data scientist at the University of Chicago and an organizer of the ICBINB workshop, recognize the inevitability of AI-generated scientific papers becoming commonplace. "This technology is only going to get better," Schein says, emphasizing that attempts to restrict AI's role in research are unlikely to succeed.

Looking ahead, the implications of AI as an autonomous scientific investigator are profound. Clune envisions a two-stage transition: initially, the scientific literature will be inundated with low-quality, AI-generated papers that peer-review systems must sift through; eventually, AI systems will surpass human researchers in scientific capability, ushering in an era of rapid discovery. He imagines humans becoming curators of AI-generated knowledge rather than primary discoverers.

Not all experts agree that fully autonomous AI science is the ideal future. Liakata advocates for a collaborative model where humans and AI agents engage in advanced interaction, with humans maintaining oversight, scrutinizing results, and contributing creatively. This partnership could harness the strengths of AI while preserving critical human judgment and ethical considerations.

The emergence of AI-authored research papers challenges longstanding norms in scientific practice and publishing. While these systems hold promise for accelerating discovery and tackling complex problems, they also raise concerns about quality control, reproducibility, and the role of human expertise. The scientific community must adapt quickly, developing new frameworks for peer review, authorship, and accountability to ensure that AI serves as a tool for enhancing, rather than undermining, the integrity of science.

As AI continues to evolve, the relationship between human scientists and their artificial counterparts will shape the trajectory of research for decades to come. Whether AI becomes an indispensable partner or a dominant force in science depends on how the community navigates this transformative moment. What is clear is that the age of AI-authored science has arrived, and with it, a new era of both opportunity and challenge.