In a notable step toward understanding the human mind, researchers have developed a technique that translates brain activity into descriptive sentences, effectively generating captions for what a person is seeing or imagining. The approach, termed “mind captioning,” combines non-invasive brain imaging with artificial intelligence (AI) to decode the visual content represented in neural activity, offering a new window onto how the brain interprets the world.

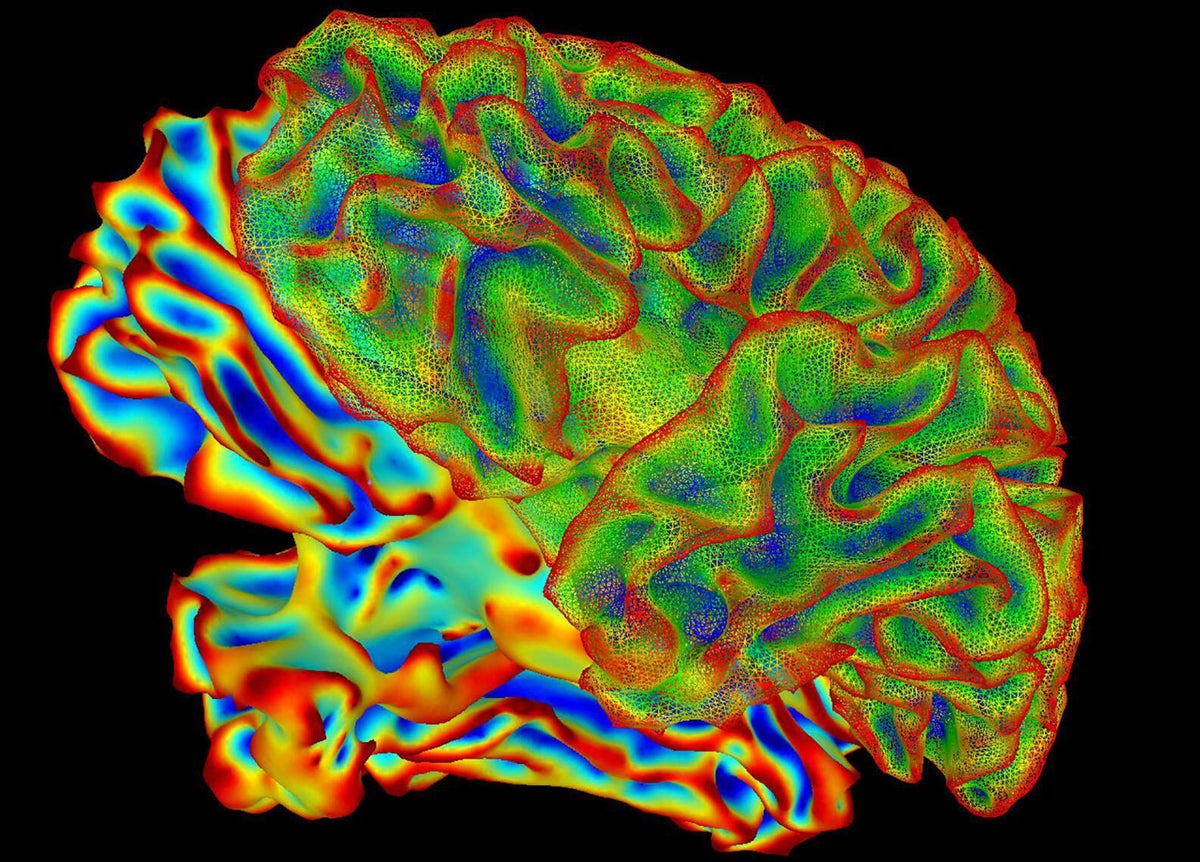

The study, recently published in the journal *Science Advances*, presents a method for generating detailed descriptions of visual scenes from brain scans. Using functional magnetic resonance imaging (fMRI), a non-invasive technology that measures brain activity indirectly by detecting changes in blood flow, the researchers recorded participants’ neural responses as they watched videos and, later, as they recalled those videos from memory. From these activity patterns, the AI-driven model generated natural-language sentences that closely matched the content of the viewed or remembered scenes.

Alex Huth, a computational neuroscientist at the University of California, Berkeley, who was not involved in the study, praised the model’s capabilities, saying that it predicts what a person is looking at “with a lot of detail,” something that had long been considered extremely difficult. Capturing this much nuance from brain activity goes well beyond earlier efforts, which mostly identified isolated keywords rather than full contextual descriptions.

Decoding brain activity to interpret sensory experiences has been a focus of neuroscience for more than a decade. Earlier studies successfully predicted simple elements of viewed or heard stimuli, such as individual words or objects, from brain scans. Decoding complex, dynamic content, such as short videos or abstract imagery, proved much harder. Earlier methods also often relied on AI systems that composed the sentences themselves, which made it unclear whether a description truly reflected the brain’s representation or the AI’s own biases.

To address these challenges, a team led by Tomoyasu Horikawa at NTT Communication Science Laboratories in Kanagawa, Japan, developed a two-step method that combines AI language models with brain imaging data. First, a deep language model analyzed the text captions of more than 2,000 videos, converting each caption into a unique numerical “meaning signature” that captures the semantic content of the scene. Second, the researchers trained a decoder on the fMRI scans of six participants as they watched these videos, teaching it to associate each pattern of brain activity with the meaning signature of the corresponding video.
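
The decoding step can be illustrated with a minimal sketch. The article does not say which learning algorithm the team used, so this example assumes a ridge-regularized linear regression from voxel patterns to feature vectors. It also replaces both the fMRI scans and the language-model signatures with synthetic data from a hypothetical `simulate` helper, in which each simulated voxel is a noisy linear mix of hidden semantic features.

```python
import math
import random


def transpose(A):
    return [list(col) for col in zip(*A)]


def matmul(A, B):
    """Plain-Python matrix product of A (n x m) and B (m x p)."""
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]


def solve(A, B):
    """Solve A @ X = B (A square) by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [list(a) + list(b) for a, b in zip(A, B)]  # augmented matrix [A | B]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        pivot = M[c][c]
        M[c] = [v / pivot for v in M[c]]
        for r in range(n):
            if r != c and M[r][c] != 0.0:
                f = M[r][c]
                M[r] = [x - f * y for x, y in zip(M[r], M[c])]
    return [row[n:] for row in M]  # right-hand block now holds X


def fit_ridge(X, Y, lam=1.0):
    """Weights W (voxels x features) minimising |XW - Y|^2 + lam * |W|^2."""
    Xt = transpose(X)
    XtX = matmul(Xt, X)
    for i in range(len(XtX)):
        XtX[i][i] += lam
    return solve(XtX, matmul(Xt, Y))


def decode(x, W):
    """Predict a 'meaning signature' from one brain-activity pattern x."""
    return matmul([x], W)[0]


def pearson(a, b):
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


def simulate(n, mixing, noise, rng):
    """Hypothetical data: voxels are a noisy linear mix of hidden semantic features."""
    k = len(mixing)
    feats = [[rng.gauss(0, 1) for _ in range(k)] for _ in range(n)]
    clean = matmul(feats, mixing)
    voxels = [[v + rng.gauss(0, noise) for v in row] for row in clean]
    return voxels, feats


if __name__ == "__main__":
    rng = random.Random(0)
    n_features, n_voxels = 5, 30
    mixing = [[rng.gauss(0, 1) for _ in range(n_voxels)] for _ in range(n_features)]
    X_train, Y_train = simulate(200, mixing, 0.1, rng)  # "training videos"
    X_test, Y_test = simulate(20, mixing, 0.1, rng)     # unseen "videos"
    W = fit_ridge(X_train, Y_train, lam=1.0)
    scores = [pearson(decode(x, W), y) for x, y in zip(X_test, Y_test)]
    print(f"mean correlation on held-out scans: {sum(scores) / len(scores):.3f}")
```

A linear decoder is a common choice for this kind of mapping because it is simple and easy to interpret. The ridge penalty `lam` (an assumed value here) keeps the weights stable when many voxels carry correlated signals.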

Once trained, the decoder could take new brain scans from participants watching unfamiliar videos and predict the corresponding meaning signatures. A separate AI text generator then searched for sentences whose signatures best matched these predictions, turning brain activity into human-readable descriptions. For instance, when a participant watched a video of a person jumping from the top of a waterfall, the model’s guesses progressed over successive iterations from simple phrases like “spring flow” to the more detailed and accurate “a person jumps over a deep waterfall on a mountain ridge.”
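
The iterative search can be pictured as hill climbing in sentence space. This toy sketch is an assumption, not the study’s generator: a hypothetical four-dimensional `WORD_VECS` table and a bag-of-words `embed` function stand in for the deep language model, candidate sentences come from single-word replacements, insertions, and deletions, and the search greedily keeps whichever candidate’s signature is most similar (by cosine similarity) to the decoded target.

```python
import math

# Hypothetical 4-d "meaning" vectors (axes: water, person, motion, land).
# A real system would use features from a deep language model instead.
WORD_VECS = {
    "spring": (1.0, 0.0, 0.0, 0.5),
    "flow": (1.0, 0.0, 0.5, 0.0),
    "waterfall": (1.0, 0.0, 0.3, 0.3),
    "person": (0.0, 1.0, 0.0, 0.0),
    "jumps": (0.0, 0.2, 1.0, 0.0),
    "mountain": (0.0, 0.0, 0.0, 1.0),
    "dog": (0.0, 0.3, 0.2, 0.2),
}
DIM = 4


def embed(words):
    """Toy sentence signature: the sum of the word vectors."""
    vec = [0.0] * DIM
    for w in words:
        for i, v in enumerate(WORD_VECS[w]):
            vec[i] += v
    return vec


def cosine(a, b):
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    if na == 0 or nb == 0:
        return 0.0  # an empty sentence matches nothing
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def neighbours(words):
    """Every sentence one edit away: delete, replace, or insert a word."""
    vocab = list(WORD_VECS)
    for i in range(len(words)):
        yield words[:i] + words[i + 1:]                  # delete word i
        for w in vocab:
            if w != words[i]:
                yield words[:i] + [w] + words[i + 1:]    # replace word i with w
    for i in range(len(words) + 1):
        for w in vocab:
            yield words[:i] + [w] + words[i:]            # insert w at position i


def search(target, start, max_iters=20):
    """Greedy hill climbing toward the sentence whose signature best matches target."""
    current = list(start)
    best = cosine(embed(current), target)
    history = [(" ".join(current), best)]
    for _ in range(max_iters):
        candidate = max(neighbours(current), key=lambda s: cosine(embed(s), target))
        score = cosine(embed(candidate), target)
        if score <= best:
            break  # local optimum: no single edit improves the match
        current, best = candidate, score
        history.append((" ".join(current), best))
    return history


if __name__ == "__main__":
    # Stands in for a signature predicted from brain activity.
    decoded = embed(["person", "jumps", "waterfall"])
    for sentence, score in search(decoded, ["spring", "flow"]):
        print(f"{score:.3f}  {sentence}")
```

Because each iteration accepts only a strictly better match, the printed guesses improve monotonically and the search stops at a local optimum. A practical system would propose far richer edits with a language model rather than enumerating a tiny fixed vocabulary.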

The researchers also tested whether the system could interpret mental imagery. Participants recalled video clips they had seen earlier, and the AI generated descriptions of these memories based solely on brain activity recorded during recall. This finding suggests that the brain uses similar representational patterns when perceiving and when remembering a visual scene, and that the decoder can read out remembered content as well as what is currently in view.

Beyond its scientific interest, the technology has promising practical applications. Because it uses non-invasive fMRI, the approach could inform the development of brain–computer interfaces for people with communication difficulties, such as stroke survivors who struggle to produce language. By converting non-verbal mental representations directly into text, such systems could give people a new way to express their thoughts and experiences without relying on speech or movement.

However, the advance also raises important ethical questions about mental privacy. As AI models become better at revealing intimate thoughts, emotions, and potentially sensitive health information from brain data, concerns about misuse for surveillance, manipulation, or discrimination naturally arise. Both Horikawa and Huth emphasize that current models require explicit participant consent and cannot read private or unrelated thoughts. “Nobody has shown you can do that, yet,” Huth cautions, underscoring the need for careful regulation and ethical guidelines as the technology progresses.

This pioneering research builds on a growing body of work striving to bridge the gap between brain activity and human language, bringing us closer to a future where thoughts and